Early access to Nebius AI is officially unlocked

We at Nebius AI know that GPUs are in great demand and are happy to address this bottleneck with our early access offering. We have just opened access to our AI-centric cloud platform optimized for intensive workloads.

Starting November 1, you can explore the platform's products and pricing in detail. Our comprehensive documentation, lineup of solutions, pricing specifics, and terms for reserving compute resources are all open to you.

We’re excited to provide you with top-notch GPUs, including NVIDIA® H100 SXM5, hosted in our own data center in Finland. They are ready to use right away, with no waiting lists. Equipped with an InfiniBand interconnect at 3.2 Tb/s per host, the H100 SXM5 GPUs are well suited to your LLM or generative AI workloads.

We provide not just GPUs but a training-ready cloud platform. On top of the graphics cards, it includes Compute Cloud, Managed Kubernetes, Object Storage with an S3 API, network drives, essential security services, and a Marketplace.

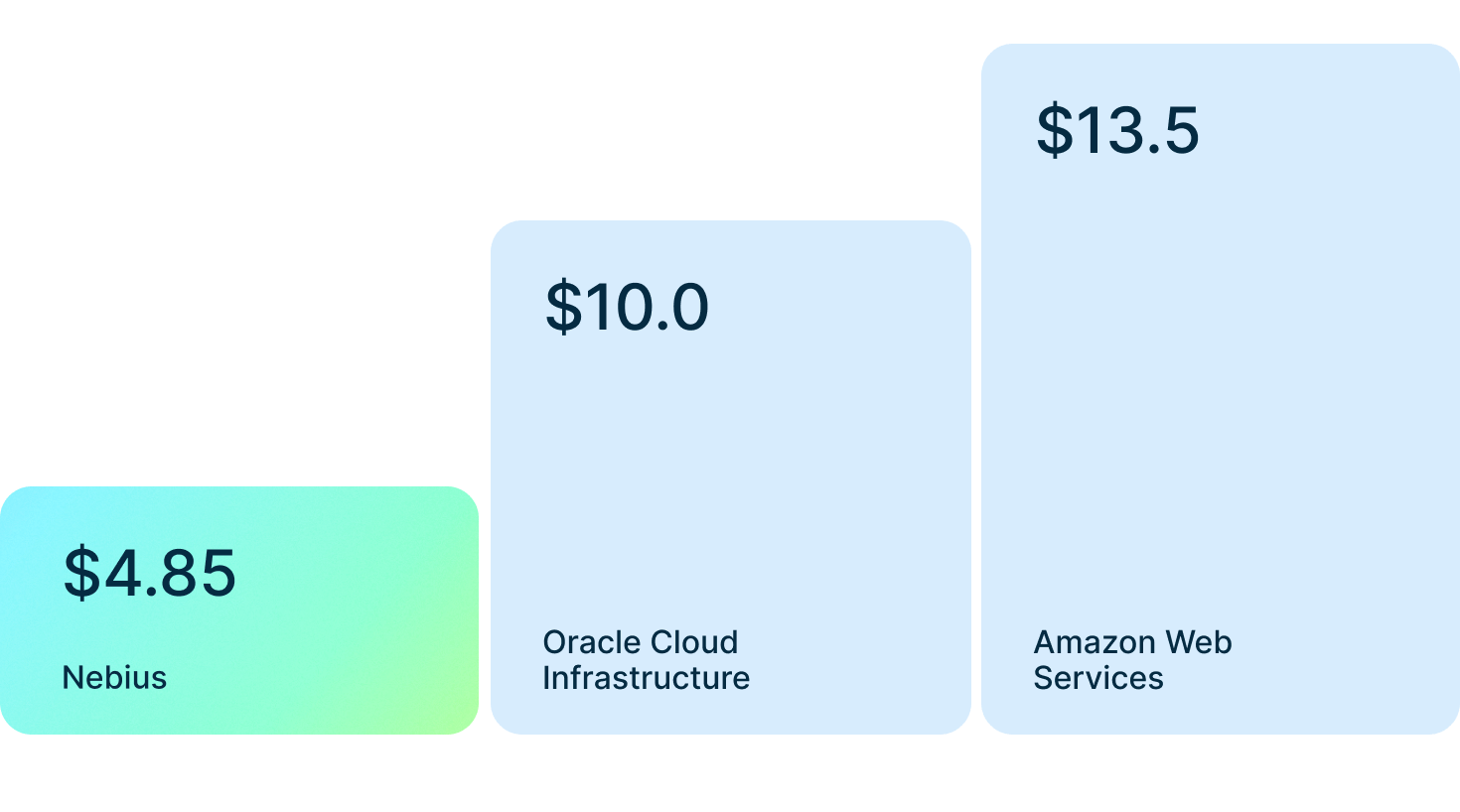

With Nebius AI, you can spend at least 50% less on your GPU compute compared to major public cloud providers. H100 SXM5 pricing starts at $4.85/hour for a single GPU on a pay-as-you-go basis.

Rates vary with GPU consumption and the length of the reservation period, with prices dropping to $3.15/hour per GPU for a long-term reservation commitment.
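To make the two quoted rates concrete, here is a rough sketch of the arithmetic in Python. Only the $4.85 and $3.15 hourly rates come from this announcement. The workload of 8 GPUs for 24 hours and the helper names `gpu_cost` and `discount_percent` are assumptions chosen purely for illustration, and the actual reserved rate will depend on your consumption and commitment terms.

```python
from decimal import Decimal

# Rates quoted in the announcement (USD per GPU per hour).
PAY_AS_YOU_GO_RATE = Decimal("4.85")
LONG_TERM_RESERVE_RATE = Decimal("3.15")  # lowest quoted rate; actual rate may be higher


def gpu_cost(rate: Decimal, gpus: int, hours: int) -> Decimal:
    """Total cost in USD for `gpus` GPUs running `hours` hours at `rate`."""
    return rate * gpus * hours


def discount_percent(base: Decimal, reduced: Decimal) -> Decimal:
    """Relative saving of `reduced` versus `base`, rounded to one decimal place."""
    return ((base - reduced) / base * 100).quantize(Decimal("0.1"))


# Hypothetical workload (an assumption, not from the announcement):
# 8 GPUs running for 24 hours.
on_demand = gpu_cost(PAY_AS_YOU_GO_RATE, gpus=8, hours=24)
reserved = gpu_cost(LONG_TERM_RESERVE_RATE, gpus=8, hours=24)
saving = discount_percent(PAY_AS_YOU_GO_RATE, LONG_TERM_RESERVE_RATE)

print(f"Pay-as-you-go: ${on_demand}")  # $931.20
print(f"Long-term reservation: ${reserved}")  # $604.80
print(f"Saving vs pay-as-you-go: {saving}%")  # 35.1%
```

Under these assumptions, the lowest reserved rate is roughly a third cheaper than pay-as-you-go. `Decimal` is used instead of floats so that the dollar amounts stay exact.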

Our platform is easy to manage, but we still want to ensure seamless adoption for your team. That’s why we provide a dedicated engineer to assist you with infrastructure optimization and K8s deployment.

Access to the platform requires prior confirmation from the Nebius AI team. Just contact us if you need resources for ML workloads.